The Digital Deception Matrix: How AI Personas Are Weaponizing Truth in 2025

Recent investigations into online manipulation have revealed a disturbing transformation in how false narratives spread across digital platforms. What began as simple fake profiles has evolved into a sophisticated ecosystem of AI-generated personas, deepfake technology, and coordinated manipulation networks that pose unprecedented challenges to evidence-based investigation and public discourse.

The Evolution of Digital Manipulation

The landscape of online deception has undergone a fundamental shift in recent years, moving far beyond the crude bot networks and obviously fake accounts of the past. According to a comprehensive analysis of online personas, the digital manipulation ecosystem now operates across a spectrum ranging from authentic self-expression to sophisticated state-sponsored disinformation campaigns[1]. The implications for investigators, content creators, and the general public are far-reaching.

The exponential growth of online manipulation from 2020-2025, showing the dramatic increase in AI bot traffic, deepfake incidents, and fake account removals

The data show steep growth in manipulation techniques that mirrors the advancement of artificial intelligence capabilities. AI-generated bot traffic has increased from 15% of web activity in 2020 to an estimated 58% by 2025, while deepfake incidents have grown from 50 documented cases in 2020 to over 5,500 projected for 2025[2]. This escalation represents more than a technological evolution; it signals a fundamental breakdown in the traditional markers of authentic human communication.
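To put these figures in perspective, they can be converted into implied annual growth rates. The sketch below is plain arithmetic on the numbers quoted above (15% to 58% bot share, 50 to 5,500 deepfake incidents, 2020 to 2025). It assumes smooth compound growth over five years, which the underlying data may not follow, and the function name is illustrative.

```python
def annual_growth(start: float, end: float, years: int) -> float:
    """Compound annual growth rate implied by a start value and an end value."""
    return (end / start) ** (1 / years) - 1

YEARS = 5  # 2020 -> 2025

deepfake_rate = annual_growth(50, 5_500, YEARS)   # documented incidents
bot_share_rate = annual_growth(15, 58, YEARS)     # percent of web activity

print(f"Deepfake incidents: about {deepfake_rate:.0%} per year")
print(f"Bot traffic share:  about {bot_share_rate:.0%} per year")
```

Under that assumption, deepfake incidents grow by roughly 156% per year (more than doubling annually), while the bot share of traffic grows by roughly 31% per year. The two trends are both steep, but they are not the same kind of curve.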

The Sophistication Gap

What makes current manipulation particularly insidious is the sophistication gap between detection capabilities and creation tools. Modern AI systems can now generate highly convincing personas complete with backstories, emotional responses, and the ability to maintain long-term relationships with followers[1]. These aren’t the obvious spam accounts of yesteryear; they’re carefully crafted digital identities that can fool even experienced investigators.

The technical barriers to creating convincing fake personas have collapsed dramatically. Where once sophisticated manipulation required significant resources and expertise, today’s AI tools democratize deception. As one recent analysis noted, creating a convincing deepfake now requires merely eight minutes and $11 worth of computing power[3]. This accessibility has transformed manipulation from a specialized activity into a mainstream threat.

The AI Influencer Industrial Complex

The emergence of AI-generated influencers represents perhaps the most concerning development in digital manipulation. These synthetic personas operate as fully functional social media personalities, complete with followers, brand partnerships, and monetization strategies[4][5]. Unlike traditional fake accounts created for specific campaigns, AI influencers establish ongoing relationships with audiences, building trust that can later be exploited for various purposes.

A realistic AI-generated virtual influencer with pink hair and flawless features, exemplifying the rise of digital personas in social media.

Recent investigations have uncovered sophisticated networks of AI influencers operating across multiple platforms. Spanish AI influencer Aitana Lopez, with over 300,000 Instagram followers, exemplifies this new category of digital deception[6]. These personas generate substantial revenue through brand partnerships, subscription services, and affiliate marketing, creating powerful economic incentives for their continued proliferation.

The most troubling aspect of AI influencer networks is their potential for weaponization. Research has identified coordinated campaigns where fake personas are used to promote conspiracy theories, not because their operators believe these theories, but because controversial content drives engagement and can be monetized through various fraudulent schemes[7]. This creates a perverse incentive structure where truth becomes secondary to engagement metrics.

The Authenticity Crisis

The proliferation of AI influencers has created what researchers term an “authenticity crisis” in digital spaces. When synthetic personas can generate more engagement than real people, the fundamental economics of social media platforms become distorted[8]. This distortion has profound implications for how information spreads and how audiences form beliefs about complex topics.

The challenge for investigators and content creators is distinguishing between genuine human expression and sophisticated artificial manipulation. Traditional verification methods—checking profile creation dates, analyzing posting patterns, looking for stock photos—are increasingly ineffective against AI-generated content that can pass these basic tests[1].

Deepfakes and the Erosion of Visual Evidence

The rapid advancement of deepfake technology has fundamentally altered the evidentiary landscape for investigators. What once constituted compelling visual evidence—photographs, videos, audio recordings—can now be artificially generated with sufficient quality to fool casual observers and even some experts[9][10].

Example of deepfake face swapping technology creating realistic face swaps in photos and videos.

The progression from simple face-swapping applications to sophisticated deepfake creation tools has been remarkably rapid. Current deepfake technology can generate convincing video content in real time, enabling live impersonations that were impossible just two years ago[10]. This capability has particular implications for investigators dealing with witness testimonies, alleged evidence, and documentary materials.

The psychological impact of deepfakes extends beyond their direct use in deception. The mere knowledge that convincing fake media can be created undermines confidence in all digital evidence, creating what researchers call the “liar’s dividend”—a situation where the possibility of fabrication is used to discredit authentic evidence[9]. This erosion of trust in visual evidence poses particular challenges for those investigating claims that rely heavily on photographic or video documentation.

Case Studies in Deepfake Deception

Recent incidents demonstrate the real-world impact of deepfake technology. The cryptocurrency scam involving crypto influencer Scott Melker, where scammers used AI to generate convincing video calls and fake family accounts, resulted in losses exceeding $4 million[7]. The sophistication of this operation—combining deepfake video, AI-generated documentation, and coordinated social engineering—illustrates the evolution of digital fraud.

Similarly, the spread of false information during the 2024 UK riots, where deepfake-enhanced content contributed to real-world violence, underscores the potential for synthetic media to cause tangible harm[7]. These cases highlight the urgent need for improved detection methods and public education about synthetic media.

Conspiracy Theory Amplification Networks

The relationship between fake personas and conspiracy theory propagation has become increasingly sophisticated and systematic. Research analyzing over 80 incidents of foreign information manipulation found that conspiracy theories are now routinely amplified through coordinated networks of inauthentic accounts[11]. This amplification serves multiple purposes: generating engagement, creating the illusion of grassroots support, and providing plausible deniability for those spreading false information.

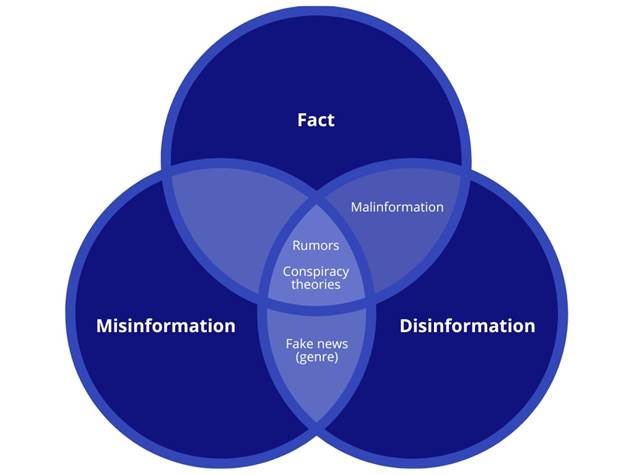

Venn diagram illustrating the relationships and overlaps between fact, misinformation, and disinformation, highlighting where conspiracy theories, rumors, malinformation, and fake news fit.

The mechanics of conspiracy theory amplification through fake personas follow predictable patterns. Initial false claims are seeded by anonymous accounts, then amplified by networks of AI-generated personas that provide apparent social proof for the claims[1]. This creates a feedback loop where false information appears to have widespread support, encouraging additional sharing and belief formation.

Analysis of the 5G-COVID conspiracy theory demonstrates these dynamics clearly. While the hashtag #5GCoronavirus was trending, research found that only about 35% of tweets came from accounts whose operators genuinely believed the conspiracy theory. The remainder came from accounts engaging in “link baiting,” sharing humorous content, or otherwise participating in the conversation without necessarily endorsing the claims[12][13]. However, the net effect was to raise the profile of the conspiracy theory and create an impression of widespread controversy.

The Trust Erosion Mechanism

The systematic use of fake personas to amplify conspiracy theories creates a particularly insidious form of trust erosion. When audiences discover that some accounts promoting certain views are inauthentic, they may begin to question the authenticity of all accounts expressing similar views[1]. This generalized skepticism can paradoxically make people more susceptible to conspiracy theories, as they lose confidence in institutional sources of information.

For content creators and investigators, this presents a challenging dynamic. Exposing fake personas and their role in spreading false information is necessary, but it can also contribute to the broader erosion of trust in digital communications. The solution requires a careful balance between maintaining appropriate skepticism and providing audiences with tools to evaluate information reliability.

Platform Responses and Regulatory Challenges

Social media platforms have implemented various measures to combat fake personas and coordinated manipulation, but these efforts have shown mixed results. Current detection systems rely heavily on behavioral analysis, looking for patterns that suggest automated or coordinated activity[1]. However, the sophistication of modern manipulation techniques increasingly allows bad actors to evade these detection methods.

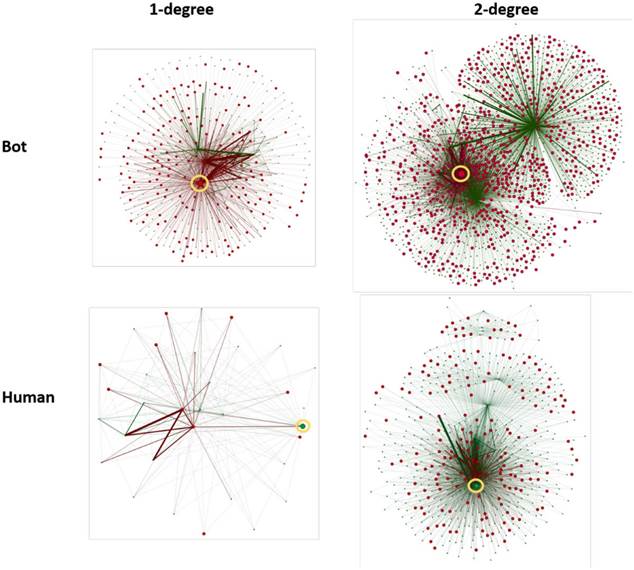

Comparison of social media bot and human network structures at 1-degree and 2-degree connection levels, highlighting differences in density and connectivity.
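One structural signal that this kind of network comparison relies on is how densely an account's neighborhood is interconnected at one and two degrees of separation. The following is a minimal sketch of that measurement, assuming an undirected follower graph stored as a dictionary of neighbor sets. The account names and the `toy` graph are hypothetical, and no threshold separating bots from humans is implied.

```python
from itertools import combinations

def ego_nodes(graph: dict[str, set[str]], center: str, hops: int) -> set[str]:
    """All nodes within `hops` steps of `center`, including `center` itself."""
    seen = {center}
    frontier = {center}
    for _ in range(hops):
        # Expand one step outward, skipping nodes already visited.
        frontier = {n for node in frontier for n in graph.get(node, set())} - seen
        seen |= frontier
    return seen

def density(graph: dict[str, set[str]], nodes: set[str]) -> float:
    """Fraction of possible undirected edges among `nodes` that actually exist."""
    if len(nodes) < 2:
        return 0.0
    possible = len(nodes) * (len(nodes) - 1) / 2
    actual = sum(1 for a, b in combinations(sorted(nodes), 2) if b in graph.get(a, set()))
    return actual / possible

# Hypothetical symmetric graph: a tight cluster around "acct" plus one outlier "d".
toy = {
    "acct": {"a", "b", "c"},
    "a": {"acct", "b", "c"},
    "b": {"acct", "a", "c"},
    "c": {"acct", "a", "b", "d"},
    "d": {"c"},
}

d1 = density(toy, ego_nodes(toy, "acct", 1))  # 1-degree neighborhood
d2 = density(toy, ego_nodes(toy, "acct", 2))  # 2-degree neighborhood
print(f"1-degree density: {d1:.2f}, 2-degree density: {d2:.2f}")
```

In this toy graph the 1-degree neighborhood is fully connected (density 1.00) and the 2-degree neighborhood is sparser (0.70). In practice such numbers are only meaningful when compared against baselines drawn from accounts of known origin.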

The challenges facing platform enforcement are substantial. Scale represents perhaps the biggest obstacle—with billions of accounts across major platforms, comprehensive human review is impossible. Automated detection systems must balance accuracy with efficiency, often resulting in false positives that affect legitimate users or false negatives that allow sophisticated manipulation to continue[1].

Recent regulatory responses, including the UK’s Online Safety Act and the EU’s Digital Services Act, represent attempts to address these challenges through legal frameworks. However, early implementation has revealed the complexity of regulating synthetic personas and coordinated manipulation[7]. The global nature of these operations often places them beyond the reach of national legislation, while the rapid evolution of techniques outpaces regulatory adaptation.

The Verification Dilemma

Platform verification systems have evolved from simple authentication tools to complex trust mechanisms, but this evolution has created new vulnerabilities. The transformation of verification badges from authenticity indicators to subscription services has undermined their utility as trust signals[7]. When fake personas can obtain verification badges, the entire verification ecosystem becomes compromised.

The challenge for platforms is developing verification systems that can accurately distinguish between authentic human users and sophisticated AI-generated personas. This requires not just technical solutions, but also policy frameworks that can adapt to rapidly evolving manipulation techniques.

Detection Methods and Investigative Approaches

The arms race between manipulation techniques and detection methods has driven significant innovation in investigative approaches. Modern detection systems combine multiple analysis techniques: behavioral pattern recognition, content analysis, network mapping, and linguistic analysis[1]. However, the effectiveness of these methods depends on their implementation and the sophistication of the manipulation being detected.

Technical approaches to detection have shown promising results in controlled environments, with some systems achieving over 97% accuracy in identifying fake accounts[1]. However, real-world implementation faces significant challenges. The dynamic nature of manipulation techniques means that detection systems must constantly evolve to remain effective.
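A short base-rate calculation helps explain why a figure like 97% can look very different at platform scale. The sketch below assumes, purely for illustration, that the detector catches 97% of fake accounts, correctly clears 97% of real ones, and that 5% of accounts are fake. None of these parameters come from the cited research, which reports a single accuracy figure.

```python
def flagged_breakdown(total: int, prevalence: float,
                      sensitivity: float, specificity: float) -> tuple[float, float]:
    """Return (true positives, false positives) among the flagged accounts."""
    fake = total * prevalence
    real = total - fake
    true_pos = fake * sensitivity          # fake accounts correctly flagged
    false_pos = real * (1 - specificity)   # real accounts wrongly flagged
    return true_pos, false_pos

# Hypothetical inputs: 1,000,000 accounts, 5% fake, 97% sensitivity and specificity.
true_pos, false_pos = flagged_breakdown(1_000_000, 0.05, 0.97, 0.97)
flag_precision = true_pos / (true_pos + false_pos)

print(f"Fake accounts flagged: {true_pos:,.0f}")
print(f"Real accounts flagged: {false_pos:,.0f}")
print(f"Share of flags that are correct: {flag_precision:.0%}")
```

Under these assumptions, about 48,500 fake accounts are flagged alongside about 28,500 legitimate ones, so only around 63% of flags are correct. The lower the true share of fake accounts, the worse this ratio becomes.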

Manual Verification Techniques

For investigators and content creators, manual verification remains an essential skill. Key techniques include reverse image searches, cross-platform consistency checks, temporal analysis of account activity, and assessment of engagement patterns[1]. However, these methods require significant expertise and time investment, making them impractical for casual users.
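Temporal analysis of account activity is the easiest of these techniques to illustrate. The sketch below computes two simple indicators from a list of post timestamps: how regular the gaps between posts are, and how many distinct hours of the day the account is active. The function names and sample data are hypothetical, and both indicators are weak signals at best. Scheduled posts from real people look regular, and a well-built synthetic persona can randomize its timing.

```python
from datetime import datetime
from statistics import mean, pstdev

def interval_cv(timestamps: list[datetime]) -> float:
    """Coefficient of variation of the gaps between consecutive posts.

    Values near 0 indicate metronome-like regularity.
    """
    ordered = sorted(timestamps)
    gaps = [(b - a).total_seconds() for a, b in zip(ordered, ordered[1:])]
    if len(gaps) < 2 or mean(gaps) == 0:
        raise ValueError("need at least three distinct posting times")
    return pstdev(gaps) / mean(gaps)

def active_hours(timestamps: list[datetime]) -> int:
    """Number of distinct hours of the day in which the account has posted."""
    return len({t.hour for t in timestamps})

# Hypothetical account posting exactly once an hour, around the clock.
scheduled = [datetime(2025, 1, 1, hour, 0) for hour in range(24)]

# Hypothetical account posting at irregular times during waking hours.
organic = [
    datetime(2025, 1, 1, 8, 0),
    datetime(2025, 1, 1, 8, 5),
    datetime(2025, 1, 1, 12, 30),
    datetime(2025, 1, 1, 19, 45),
]

print(interval_cv(scheduled), active_hours(scheduled))
print(interval_cv(organic), active_hours(organic))
```

The first account shows zero variation and activity in all 24 hours, a pattern worth a closer look. The second shows uneven gaps concentrated in three hours of the day.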

The development of AI-generated content that can pass basic verification tests has made manual detection increasingly difficult. Modern fake personas can maintain consistent identities across platforms, generate original content, and interact naturally with other users[1]. This sophistication requires investigators to develop more nuanced approaches to authenticity assessment.

Implications for Evidence-Based Investigation

The proliferation of sophisticated fake personas has profound implications for evidence-based investigation across all domains. Traditional investigative methods that rely on witness testimony, documentary evidence, and digital communications must be adapted to account for the possibility of synthetic content and artificial manipulation.

For investigators dealing with conspiracy theories, UFO claims, and paranormal phenomena, the challenge is particularly acute. These domains often rely heavily on witness accounts, photographic evidence, and social media documentation—all of which can now be artificially generated with high fidelity[1]. The burden of proof has shifted from simply documenting claims to establishing the authenticity of the documentation itself.

Methodological Adaptations

Effective investigation in the age of synthetic personas requires methodological adaptations that account for the possibility of artificial content. This includes implementing multiple source verification, maintaining detailed provenance records, and developing expertise in synthetic media detection[1]. Investigators must also be prepared to explain to audiences why certain types of evidence may be less reliable than in the past.
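A provenance record can be as simple as a cryptographic fingerprint of the file plus a note of where, when, and by whom it was collected. The following is a minimal sketch using Python's standard library. The field names, the example URL, and the collector label are assumptions made for illustration, not an established schema.

```python
import hashlib
import json
from datetime import datetime, timezone

def provenance_record(content: bytes, source_url: str, collected_by: str) -> dict:
    """Build a record tying a piece of evidence to where and when it was obtained."""
    return {
        "sha256": hashlib.sha256(content).hexdigest(),  # fingerprint of the exact bytes
        "size_bytes": len(content),
        "source_url": source_url,
        "collected_by": collected_by,
        "collected_at": datetime.now(timezone.utc).isoformat(),
    }

def matches(record: dict, content: bytes) -> bool:
    """True if `content` is byte-for-byte what was originally recorded."""
    return hashlib.sha256(content).hexdigest() == record["sha256"]

# Hypothetical evidence and source.
evidence = b"example image bytes"
record = provenance_record(evidence, "https://example.com/post/123", "investigator-1")
print(json.dumps(record, indent=2))
print(matches(record, evidence))
```

A record like this shows only that a file has not changed since it was collected. It says nothing about whether the file was authentic to begin with, which is why it complements multiple source verification rather than replacing it.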

The challenge extends beyond technical verification to include audience education. When investigating claims, content creators must help audiences understand not just what evidence supports or refutes a claim, but how to evaluate the reliability of evidence in an environment where sophisticated fakes are possible.

Protection Strategies and Best Practices

Developing effective protection strategies against sophisticated manipulation requires a multi-layered approach combining technical solutions, educational initiatives, and community-based verification[1]. No single approach is sufficient; effectiveness depends on implementing comprehensive strategies that address both individual and systemic vulnerabilities.

For content creators, protection strategies must balance accessibility with accuracy. Audiences need practical tools for evaluating information reliability, but these tools must be usable by people without technical expertise. This requires developing educational approaches that build intuitive understanding of manipulation techniques without requiring deep technical knowledge.

Community-Based Verification

Community-based verification represents one of the most promising approaches to combating synthetic personas. By leveraging the collective intelligence of engaged communities, it becomes possible to identify and counter manipulation that might escape automated detection systems[1]. However, this approach requires careful design to avoid creating echo chambers or amplifying false information.

Successful community-based verification systems combine diverse perspectives with structured evaluation processes. They provide mechanisms for challenging claims, presenting counter-evidence, and building consensus around factual determinations. For communities focused on conspiracy theories and paranormal claims, these systems offer a way to maintain healthy skepticism while avoiding wholesale rejection of novel ideas.

The Future of Digital Authenticity

Looking forward, the challenges posed by sophisticated fake personas are likely to intensify before they ease. The continued advancement of AI capabilities, the democratization of manipulation tools, and the economic incentives for synthetic content creation all point toward an increasingly complex authenticity landscape[1].

The development of more sophisticated detection methods offers some hope, but the fundamental challenge remains: in a world where convincing fake content can be created rapidly and cheaply, how do we maintain systems of trust and verification that enable productive discourse? The answer likely lies not in perfect detection systems, but in resilient communities that can adapt to changing threat landscapes.

Emerging Technologies and Threats

Several emerging technologies will likely reshape the manipulation landscape in the coming years. Advanced language models are becoming capable of maintaining long-term personas with consistent personalities and memories[14]. Real-time deepfake generation, already feasible for live impersonation, is likely to become more convincing and more widely available as video synthesis improves[9]. And the integration of these technologies with social media platforms could create new forms of manipulation that are currently difficult to imagine.

At the same time, detection technologies are also advancing. Blockchain-based provenance systems may enable better tracking of content authenticity[1]. Biometric verification could provide stronger identity assurance. And machine learning approaches to detection continue to improve in accuracy and speed.
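The core idea behind blockchain-style provenance is a hash chain, in which each entry commits to everything recorded before it. The sketch below shows only that tamper-evidence property. It is not a real blockchain (there is no distribution or consensus), and the function names and sample content are illustrative assumptions.

```python
import hashlib

GENESIS = "0" * 64  # placeholder hash for the start of the chain

def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()

def build_chain(content_hashes: list[str]) -> list[str]:
    """Link each content hash to the previous link, producing one hash per entry."""
    chain = []
    prev = GENESIS
    for content_hash in content_hashes:
        prev = sha256_hex((prev + content_hash).encode())
        chain.append(prev)
    return chain

def verify_chain(content_hashes: list[str], chain: list[str]) -> bool:
    """True if the recorded chain matches the chain recomputed from the content."""
    return build_chain(content_hashes) == chain

# Hypothetical items logged in order.
items = [sha256_hex(b"photo-v1"), sha256_hex(b"video-v1")]
chain = build_chain(items)

# Swapping the first item after the fact changes every later link.
tampered = [sha256_hex(b"photo-v2"), items[1]]

print(verify_chain(items, chain))
print(verify_chain(tampered, chain))
```

The first check succeeds and the second fails. Altering any earlier entry invalidates every link after it, which is the property such systems rely on to make retroactive edits detectable.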

Conclusion

The rise of sophisticated fake personas represents a fundamental challenge to evidence-based investigation and public discourse. While the technical capabilities for creating convincing synthetic content continue to advance, so too do our understanding of these threats and our ability to counter them. The key lies in maintaining appropriate skepticism without falling into paranoid rejection of all digital communication.

For investigators, content creators, and engaged citizens, the path forward requires combining technical literacy with methodological rigor. We must develop the skills to evaluate digital evidence critically while maintaining the openness necessary for productive inquiry. This balance—between skepticism and engagement, between caution and curiosity—will determine whether digital spaces can continue to serve as venues for meaningful investigation and discourse.

The stakes in this endeavor could not be higher. In an era where conspiracy theories can spark real-world violence and where public trust in institutions continues to erode, the work of identifying and countering digital manipulation becomes not just a technical challenge, but a civic responsibility. The future of evidence-based investigation depends on our collective ability to navigate this new landscape with both wisdom and determination.

The digital masquerade is real, sophisticated, and growing. But with proper preparation, methodical approaches, and commitment to truth over convenience, it remains possible to separate authentic human expression from artificial manipulation. The pursuit of truth has never been more important, or more challenging, than it is today.

References

[1] Online-Personas_-The-Good-The-Bad-and-The-Decept.docx

[2] https://arxiv.org/abs/2503.14828

[3] https://revistamentor.ec/index.php/mentor/article/view/8740

[4] https://www.revistahematologia.com.ar/index.php/Revista/article/view/649

[5] https://publications.ascilite.org/index.php/APUB/article/view/1088

[6] https://repositorio.cientifica.edu.pe/handle/20.500.12805/3729

[7] https://arxiv.org/pdf/2502.11078.pdf

[8] https://pmc.ncbi.nlm.nih.gov/articles/PMC10805610/

[9] https://arxiv.org/pdf/2409.05257.pdf

[10] https://pmc.ncbi.nlm.nih.gov/articles/PMC11789697/

[11] http://arxiv.org/pdf/2502.11827.pdf

[12] https://dl.acm.org/doi/pdf/10.1145/3613904.3642036

[13] https://arxiv.org/html/2503.16527

[14] https://www.forbes.com/sites/neilsahota/2024/07/29/the-dark-side-of-ai-is-how-bad-actors-manipulate-minds/